The Need for Speed: Distributed Training Unlocks a New Era of AI Development

The AI landscape is hyper-competitive, and the speed at which a team can train and iterate on models increasingly shapes who leads the market. In this race, distributed training has emerged as a game-changer: it removes the single-machine ceiling on model development and makes much faster iteration possible.

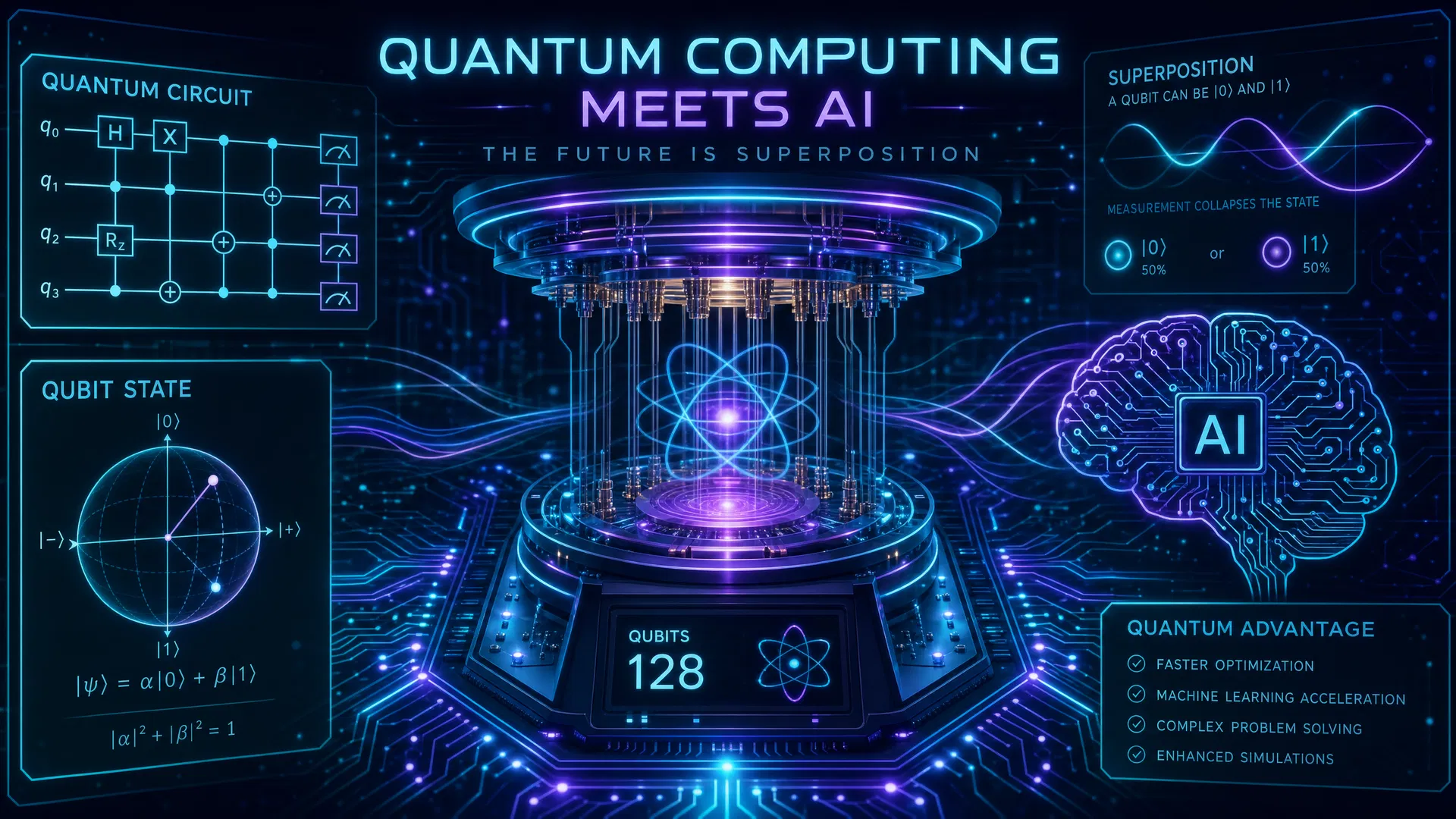

Traditionally, training large AI models on a single machine was painstakingly slow and often impractical. Training a cutting-edge large language model (LLM) or a sophisticated vision transformer on a lone GPU could take months, if not years, and the largest models do not even fit in a single GPU's memory. Distributed training changes this. By splitting the computational workload across multiple interconnected GPUs, or across entire clusters of machines, it parallelizes the training process and sharply reduces the time required to develop and iterate on complex models.

Recent advancements in distributed training tooling, such as PyTorch's `torch.distributed` package and TensorFlow's `tf.distribute` API, together with communication libraries like NVIDIA's NCCL (NVIDIA Collective Communications Library), have made this technique more accessible and efficient than ever. These tools handle the orchestration of data distribution, gradient synchronization, and model parameter updates across many nodes, abstracting away much of the underlying complexity for developers. Two complementary strategies let teams tune performance to the specific model architecture and dataset size: data parallelism, where each device holds a full copy of the model and trains on a different slice of the data, and model parallelism, where the model itself is split across devices.
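To make the idea of data parallelism and gradient synchronization concrete, here is a minimal sketch in plain Python. It only simulates the workers inside a single process, so it is an illustration of the concept rather than how any of the frameworks above are implemented. The function names (`local_gradient`, `all_reduce_mean`, `split_into_shards`, `train`) and the toy dataset are hypothetical, and the sketch assumes the data divides evenly among the workers.

```python
# Pure-Python simulation of data parallelism: no GPUs or frameworks involved.
# All names here are illustrative and not taken from any real library.

def local_gradient(w, b, shard):
    """Mean-squared-error gradient of y ~ w*x + b on one worker's shard."""
    n = len(shard)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in shard) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in shard) / n
    return grad_w, grad_b


def all_reduce_mean(grads):
    """Average per-worker gradients, standing in for a collective all-reduce."""
    k = len(grads)
    return tuple(sum(g[i] for g in grads) / k for i in range(len(grads[0])))


def split_into_shards(data, num_workers):
    """Give each simulated worker an equal, contiguous slice of the data.

    Assumes len(data) is divisible by num_workers; any remainder is dropped.
    """
    size = len(data) // num_workers
    return [data[i * size:(i + 1) * size] for i in range(num_workers)]


def train(data, num_workers, steps=500, lr=0.2):
    """Gradient descent where every step averages the workers' gradients."""
    w, b = 0.0, 0.0
    shards = split_into_shards(data, num_workers)
    for _ in range(steps):
        # Each worker computes a gradient on its own shard (in parallel in
        # a real system; sequentially in this simulation).
        grads = [local_gradient(w, b, shard) for shard in shards]
        # Synchronize: every worker ends up with the same averaged gradient.
        grad_w, grad_b = all_reduce_mean(grads)
        # Every model replica applies the identical update.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy dataset drawn from the line y = 3x + 1.
data = [(x / 4, 3.0 * (x / 4) + 1.0) for x in range(8)]
w, b = train(data, num_workers=4)
print(f"w={w:.3f}, b={b:.3f}")
```

Because the shards are equal in size, the averaged gradient matches the gradient over the full dataset, so four simulated workers follow the same training trajectory as one. In a real cluster the averaging step runs across processes and machines through a collective communication library, which is the part this sketch leaves out.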

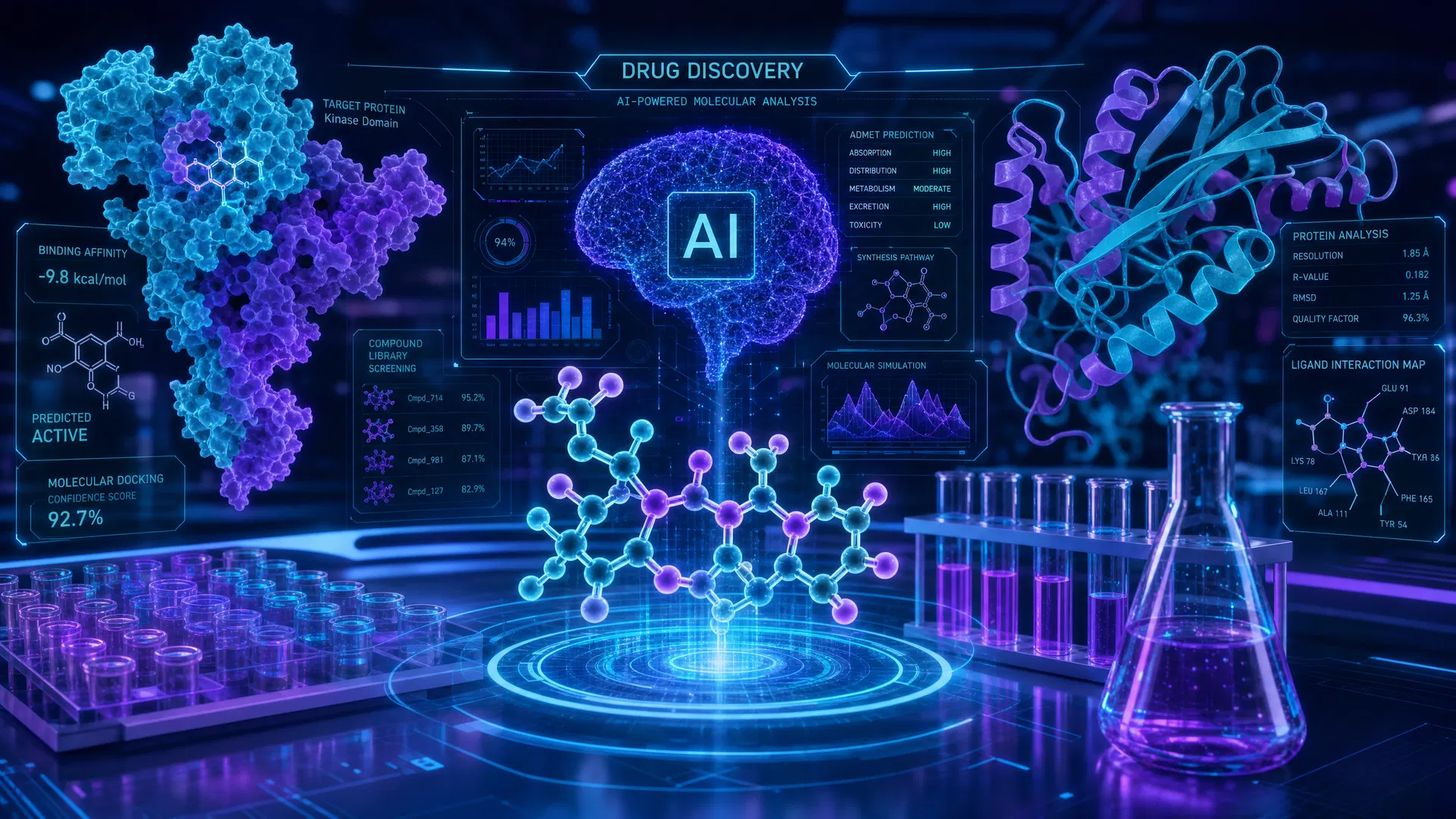

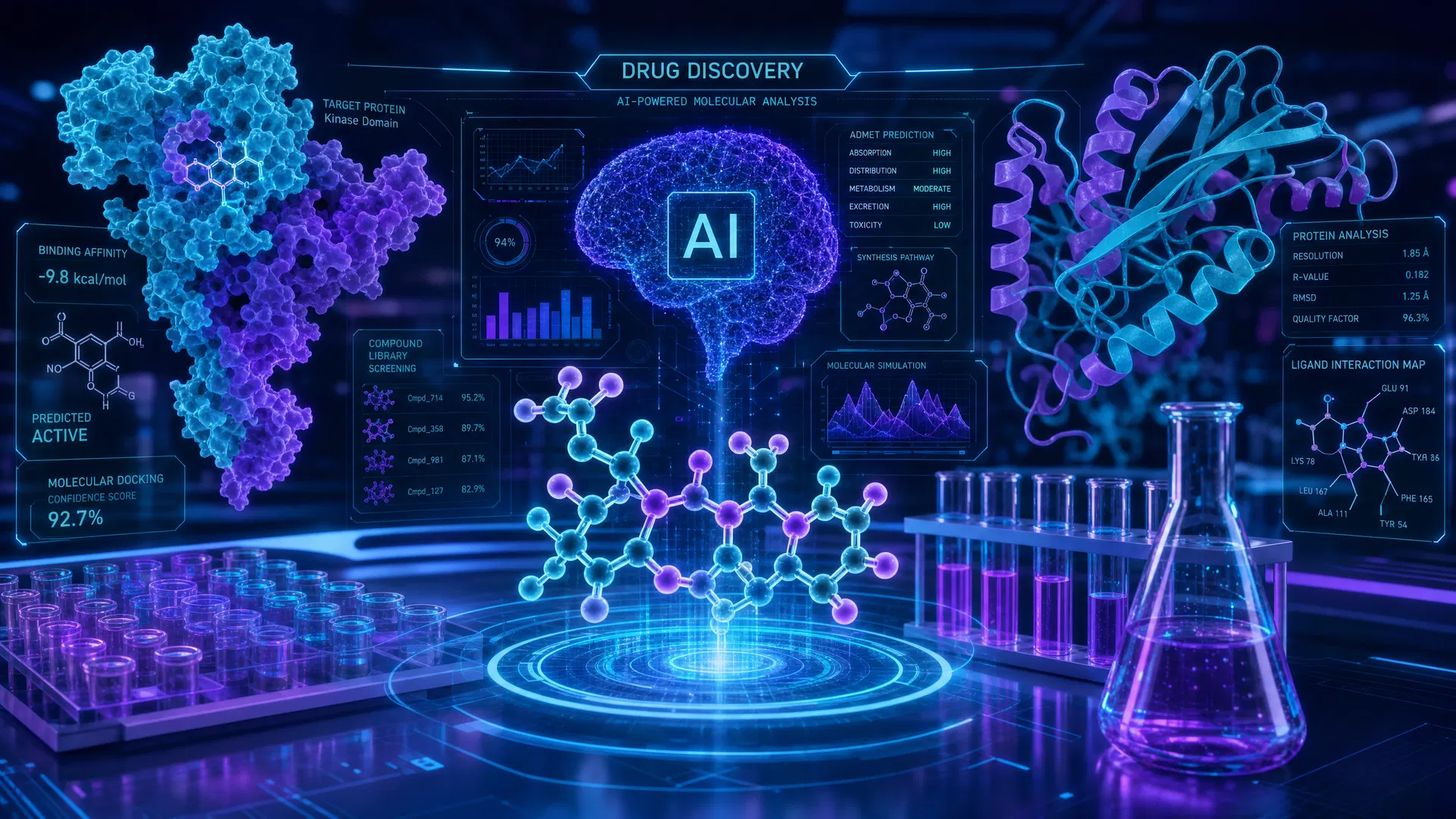

The implications for the industry are profound. Faster model development translates directly into accelerated research cycles, quicker deployment of AI-powered products and services, and a more agile response to evolving market demands. Companies can now experiment with larger, more complex architectures, explore diverse datasets, and fine-tune models with unparalleled speed. This is particularly crucial for fields like drug discovery, autonomous driving, and personalized medicine, where rapid iteration and performance gains can have life-altering impacts.

Looking ahead, distributed training is not just a performance booster; it's a foundational pillar for the future of AI. It paves the way for the development of truly multimodal AI systems, capable of understanding and generating information across various data types. It also democratizes access to state-of-the-art AI, as cloud providers continue to offer scalable distributed training infrastructure, allowing smaller teams to tackle ambitious projects. The future of AI development is undeniably distributed, promising a continuous surge of innovation and a dramatic acceleration in our ability to solve some of the world's most complex challenges.

Some links in this article are affiliate links. We may earn a small commission at no extra cost to you.

Source Attribution

This article was originally published by AInewsnow.AI and has been enhanced and curated by AInewsnow AI.
