Tiny Data, Big Impact: New AI Learns from a Whisper, Not a Roar

The AI world is buzzing with a groundbreaking development: researchers have unveiled a new AI model that can learn effectively from very small datasets. This isn't just an incremental improvement; it could be a paradigm shift, unlocking AI's potential in fields previously considered too data-starved to benefit.

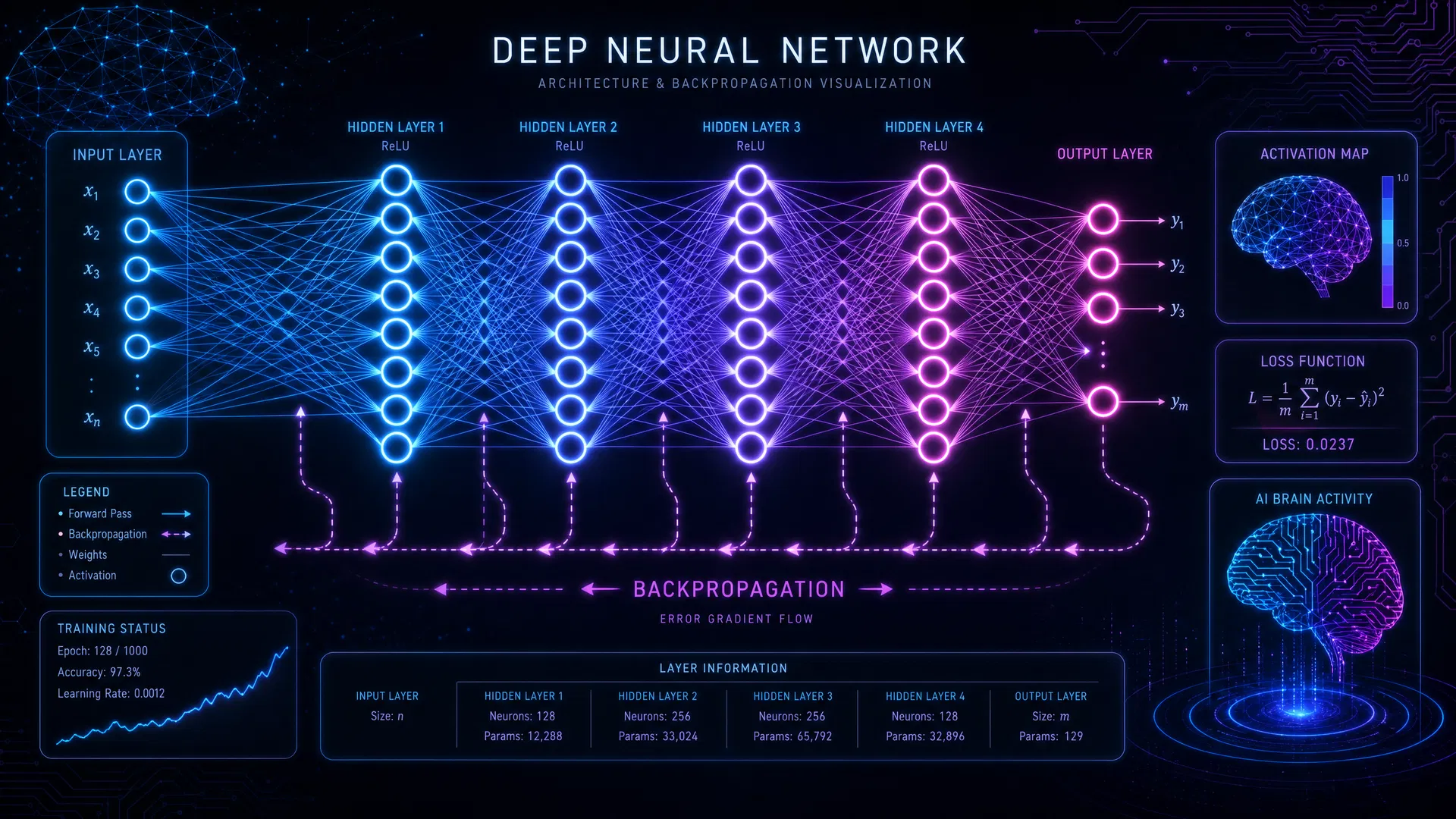

Traditionally, state-of-the-art AI, particularly deep learning, has been notoriously data-hungry. Training a robust image recognition system might require millions of labeled images, and a powerful language model, billions of text samples. This new approach, pioneered by a team at the Institute for Advanced AI Research (IAAIR), combines meta-learning techniques with novel few-shot learning algorithms. Instead of learning each new task from scratch, the AI learns how to learn, adapting quickly from a handful of examples, often as few as 10 to 20.
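The article does not say which few-shot algorithm IAAIR uses. As an illustration only, here is a minimal sketch of the classification step from prototypical networks, one well-known few-shot method: each class is summarized by the average embedding of its handful of labeled examples, and a new input is assigned to the nearest average. The `embed` function is a hypothetical stand-in that simply returns the raw features; in a real system it would be a network pretrained on many related tasks. The toy 3-way, 5-shot episode (15 labeled examples), the class names, and the data are all made up for the example.

```python
import math
import random


def embed(features):
    """Hypothetical stand-in for a pretrained embedding network (identity here)."""
    return list(features)


def prototypes(support):
    """Average each class's few support embeddings into one prototype vector."""
    protos = {}
    for label, examples in support.items():
        vecs = [embed(x) for x in examples]
        protos[label] = [sum(dim) / len(vecs) for dim in zip(*vecs)]
    return protos


def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def classify(query, protos):
    """Softmax over negative squared distances to the class prototypes."""
    q = embed(query)
    logits = {label: -sq_dist(q, p) for label, p in protos.items()}
    top = max(logits.values())  # subtract the max for numerical stability
    weights = {label: math.exp(logit - top) for label, logit in logits.items()}
    total = sum(weights.values())
    probs = {label: wgt / total for label, wgt in weights.items()}
    return max(probs, key=probs.get), probs


# Toy 3-way, 5-shot episode: 15 labeled 2-D examples in total (synthetic data).
rng = random.Random(42)
centers = {"class_a": (0.0, 0.0), "class_b": (5.0, 0.0), "class_c": (0.0, 5.0)}
support = {
    name: [(cx + rng.gauss(0, 0.5), cy + rng.gauss(0, 0.5)) for _ in range(5)]
    for name, (cx, cy) in centers.items()
}
protos = prototypes(support)
label, probs = classify((4.6, 0.3), protos)
print(label, round(probs[label], 3))
```

Nothing in this sketch is trained on the new classes: supporting one more class only takes a few labeled examples to compute one more prototype.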

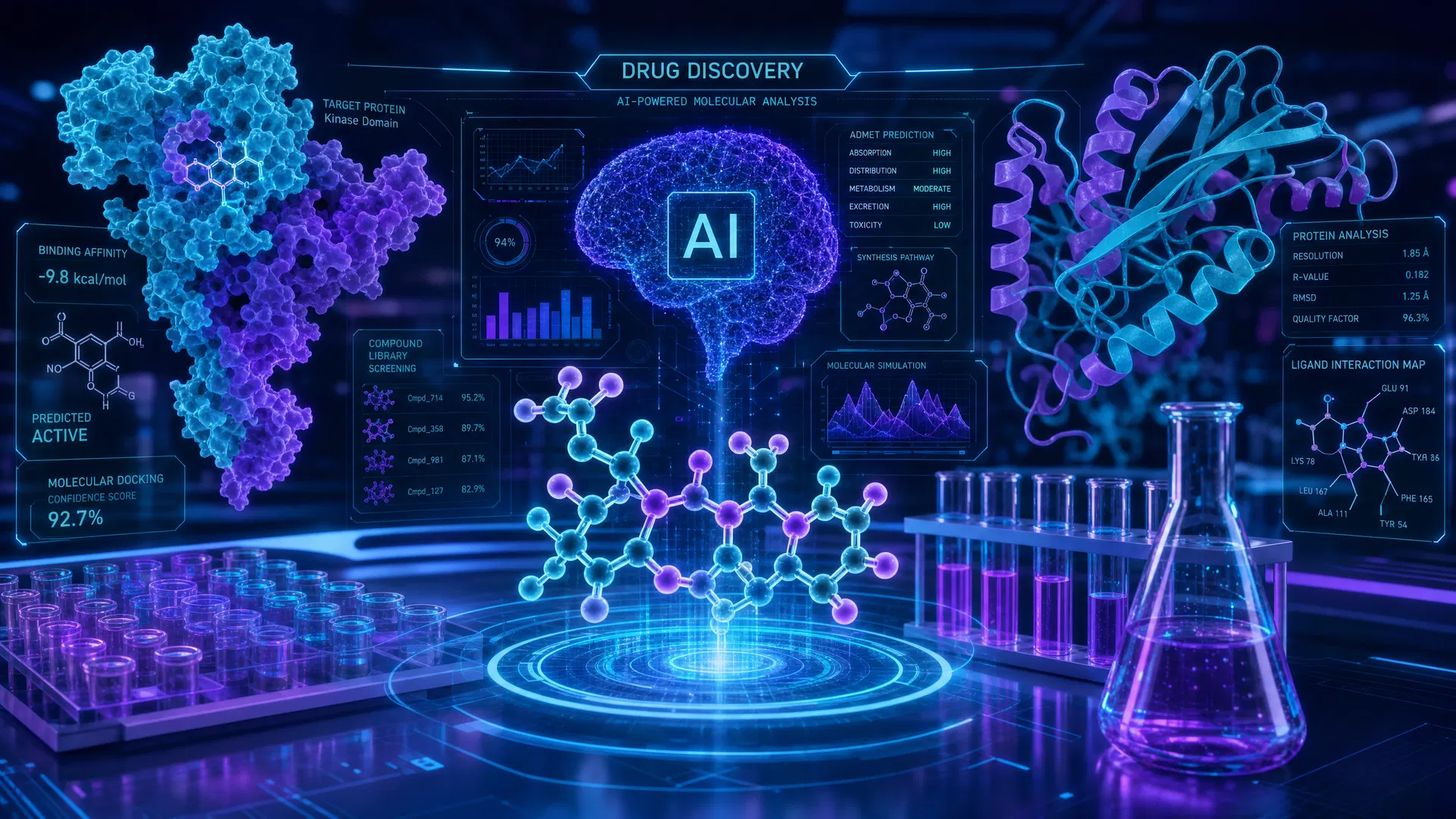

The implications are profound. Imagine developing cutting-edge medical diagnostic AI for rare diseases where patient data is inherently limited. Or deploying autonomous systems in niche industrial settings where generating vast training datasets is impractical or prohibitively expensive. "This technology democratizes AI," explains Dr. Anya Sharma, lead researcher at IAAIR. "It means smaller companies, researchers in underserved domains, and even individuals with unique problems can now harness powerful AI without needing Google-scale datasets."

The technology achieves this by pre-training on a diverse set of related tasks, allowing it to internalize fundamental patterns and relationships. When presented with a new task, it can then "transfer" this generalized knowledge and quickly adapt with minimal new information. This is akin to a human expert who, having mastered several related fields, can grasp a new, specialized concept with just a few examples.
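The pre-train-then-adapt idea can be sketched with Reptile, a simple first-order meta-learning algorithm; this is a swapped-in example, not the method from the IAAIR work. Assumptions for illustration: every "task" is fitting a line y = a*x + b, with a and b drawn from a hypothetical range near 3 and 2, and the model is a two-parameter line w*x + c. Reptile repeatedly adapts a copy of the shared starting weights to one task with a few gradient steps, then nudges the shared weights toward the adapted ones. On a new task, five examples and five gradient steps from the meta-learned start should fit far better than the same budget from a zero start.

```python
import random


def mse_and_grad(w, c, xs, ys):
    """Mean squared error of the line w*x + c, plus its gradient w.r.t. (w, c)."""
    n = len(xs)
    loss = grad_w = grad_c = 0.0
    for x, y in zip(xs, ys):
        err = w * x + c - y
        loss += err * err
        grad_w += 2 * err * x
        grad_c += 2 * err
    return loss / n, grad_w / n, grad_c / n


def adapt(w, c, xs, ys, steps=5, lr=0.1):
    """Inner loop: a few plain gradient-descent steps on one task's examples."""
    for _ in range(steps):
        _, grad_w, grad_c = mse_and_grad(w, c, xs, ys)
        w, c = w - lr * grad_w, c - lr * grad_c
    return w, c


def sample_task(rng):
    """Hypothetical task family (assumption): lines y = a*x + b, a near 3, b near 2."""
    return rng.uniform(2.5, 3.5), rng.uniform(1.5, 2.5)


def sample_points(rng, a, b, n):
    """Draw n noise-free (x, y) examples from the line y = a*x + b, x in [-1, 1]."""
    xs = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    return xs, [a * x + b for x in xs]


def reptile(rng, meta_iters=1000, meta_lr=0.1, shots=10):
    """Reptile: move the shared initialization toward each task's adapted weights."""
    w, c = 0.0, 0.0
    for _ in range(meta_iters):
        a, b = sample_task(rng)
        xs, ys = sample_points(rng, a, b, shots)
        w_task, c_task = adapt(w, c, xs, ys)
        w += meta_lr * (w_task - w)
        c += meta_lr * (c_task - c)
    return w, c


rng = random.Random(0)
meta_w, meta_c = reptile(rng)

# A new task not seen during meta-training: adapt on 5 examples, test on 100 fresh ones.
a_new, b_new = 3.2, 1.8
support_x, support_y = sample_points(rng, a_new, b_new, 5)
query_x, query_y = sample_points(rng, a_new, b_new, 100)

meta_loss, _, _ = mse_and_grad(*adapt(meta_w, meta_c, support_x, support_y), query_x, query_y)
scratch_loss, _, _ = mse_and_grad(*adapt(0.0, 0.0, support_x, support_y), query_x, query_y)

print(f"meta-learned start: w={meta_w:.2f}, c={meta_c:.2f}")
print(f"query MSE after 5 steps: meta={meta_loss:.4f}, from zero={scratch_loss:.4f}")
```

Pure Python keeps the sketch dependency-free; a real system would use a deep network and an autodiff library, but the outer update `w += meta_lr * (w_task - w)` is the core Reptile step regardless of model size.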

For industries ranging from manufacturing to personalized medicine, the ability to build robust AI models with limited data promises faster deployment, lower development costs, and highly specialized applications. The future of AI no longer depends solely on gargantuan data lakes; it is also about extracting maximum insight from a single drop. This breakthrough signals a new era for AI, in which a model's capability depends less on data volume and more on the efficiency of learning itself.

Some links in this article are affiliate links. We may earn a small commission at no extra cost to you.

Resources & Tools Mentioned

Hugging Face: open-source AI model hub
Midjourney: AI image generation platform
Perplexity AI: AI-powered search engine

Source Attribution

This article was originally published by AInewsnow.AI and has been enhanced and curated by AInewsnow AI.
